this post was submitted on 18 Jul 2024

492 points (98.4% liked)

Memes



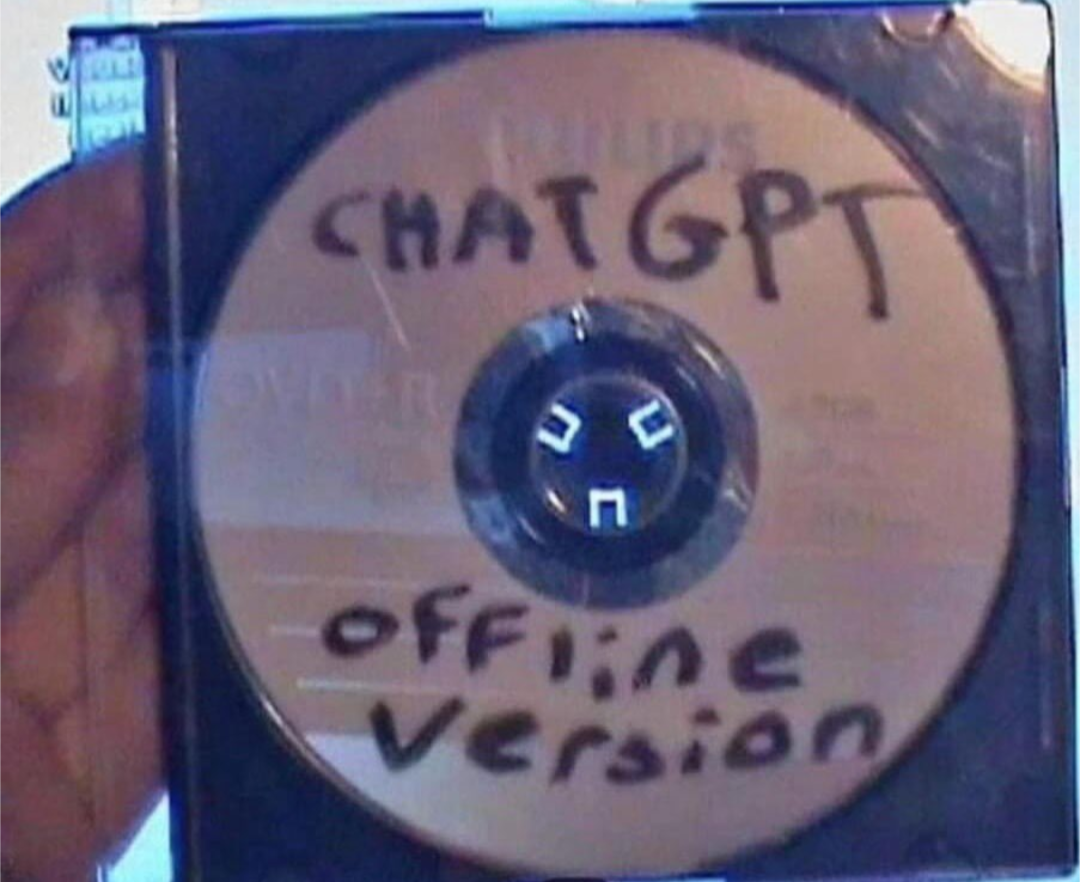

Technically possible with a small enough model to work from. It's going to be pretty shit, but "working".

Now, if we were to go further down in scale, I'm curious how/if a 700MB CD version would work.

Or how many 1.44MB floppies you would need for the actual program and smallest viable model.

Might be a DVD. A 70B Ollama LLM is like 1.5GB, so you could save many models on one DVD.

A 70B model taking 1.5GB? So about 0.17 bits (0.02 bytes) per parameter?
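Dividing the file size in bits by the parameter count gives a quick sanity check on a claim like this. Below is a minimal Python sketch, assuming decimal gigabytes (1 GB = 10^9 bytes) and ignoring tokenizer and metadata overhead; `bits_per_parameter` is a hypothetical helper name, not part of any library.

```python
def bits_per_parameter(file_size_bytes: float, num_params: float) -> float:
    """Average storage bits per parameter (ignores metadata/tokenizer overhead)."""
    return file_size_bytes * 8 / num_params


if __name__ == "__main__":
    # Figures quoted in the thread; decimal GB is an assumption.
    claimed = bits_per_parameter(1.5e9, 70e9)   # the "1.5GB for a 70B model" claim
    reported = bits_per_parameter(40e9, 70e9)   # the ~40GB llama3 70B download

    print(f"1.5 GB / 70B params: {claimed:.2f} bits per parameter")
    print(f"40 GB / 70B params:  {reported:.2f} bits per parameter")
```

On these assumptions, 1.5GB for 70B parameters works out to about 0.17 bits (roughly 0.02 bytes) per parameter, well below even 1 bit per weight, while 40GB comes to about 4.6 bits per parameter, in line with a roughly 4-bit quantised download.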

Are you sure you're not thinking of a heavily quantised and compressed 7B model or something? Ollama's llama3 70B is 40GB from what I can find; that's a lot of DVDs.
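To put the disc question in numbers, here is a rough Python sketch that counts how many floppies, CDs or DVDs a model file would span. The capacities are assumed nominal values (1,474,560 bytes for a formatted 1.44MB floppy, 737,280,000 bytes for an 80-minute CD-R, 4.7 billion bytes for a single-layer DVD), file sizes use decimal GB, filesystem overhead is ignored, and `discs_needed` is a hypothetical helper.

```python
# Assumed nominal data capacities in bytes; real usable space varies by format.
MEDIA_CAPACITY = {
    "1.44MB floppy": 1_474_560,   # 80 tracks x 2 sides x 18 sectors x 512 bytes
    "700MB CD-R": 737_280_000,    # 80-minute disc: 360,000 sectors x 2048 bytes
    "4.7GB DVD": 4_700_000_000,   # nominal single-layer DVD capacity
}


def discs_needed(model_bytes: int, capacity_bytes: int) -> int:
    """Smallest whole number of media that can hold the file (integer ceiling division)."""
    return (model_bytes + capacity_bytes - 1) // capacity_bytes


if __name__ == "__main__":
    # Sizes quoted in the thread, assuming decimal GB.
    sizes = [("1.5GB model", 1_500_000_000), ("40GB llama3 70B", 40_000_000_000)]
    for label, size in sizes:
        counts = ", ".join(
            f"{discs_needed(size, cap)} x {media}" for media, cap in MEDIA_CAPACITY.items()
        )
        print(f"{label}: {counts}")
```

Under those assumptions a 40GB download needs 9 DVDs, 55 CDs or 27,127 floppies, and even a 1.5GB file would take 1,018 floppies.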

Ah yes, probably the smaller version, you're right. Still, a very good LLM, better than GPT-3.